Engineering Insights

The Real Reason Your Planning System Slows Down

AUTHOR

Aditya Jaroli & Pradeep Vijayakumar

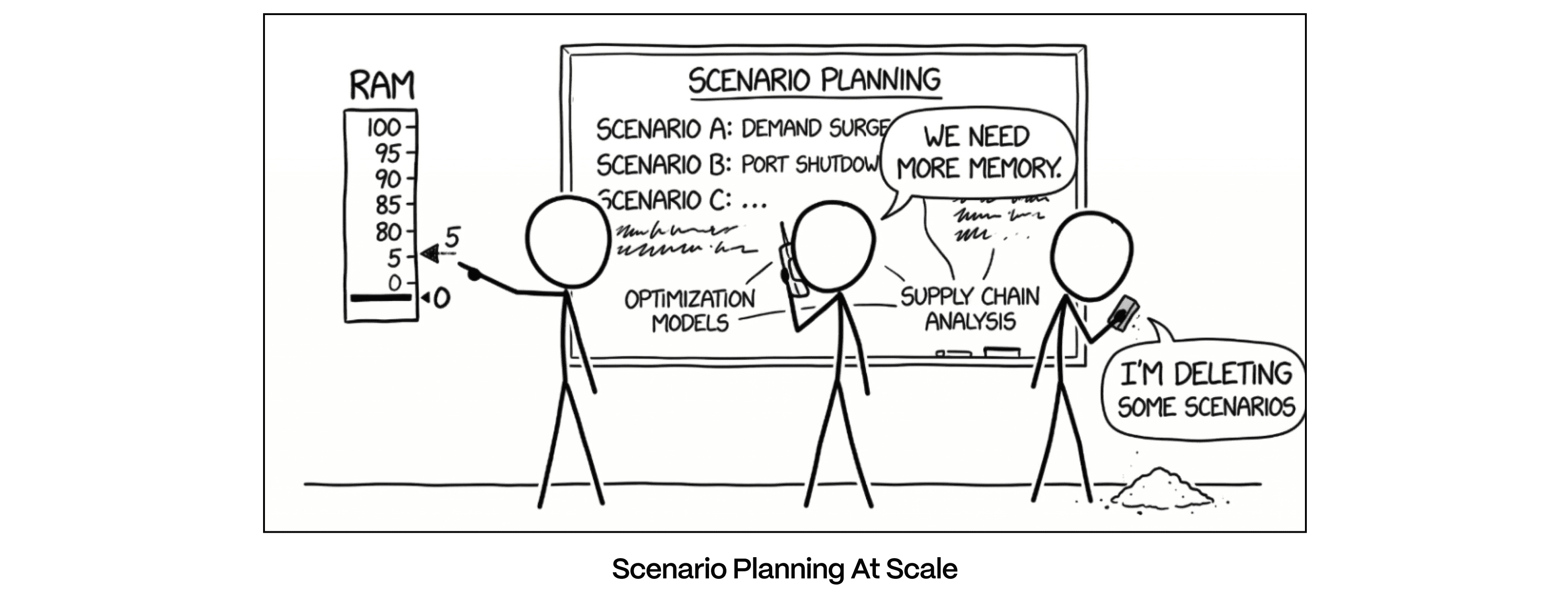

Every supply chain planning team eventually hits the same wall. The models get bigger. The scenarios multiply. The user base grows. One day, the system that used to return answers in seconds starts choking. The models outgrew the foundation.

Most teams assume it's a data problem, a model problem, or a resource problem. Usually it's the architecture.

The Problem: Planning Systems Were Built for a Smaller World

Most legacy planning and APM tools share a common architectural assumption: everything needs to live in memory. The entire working dataset — every SKU, every location, every time bucket — must fit in RAM for the system to perform.

This is what we call the in-memory tax.

When your dataset is small, you don't notice it. But as planning models grow — more granularity, more scenarios, more users — the tax comes due. You either pay enormous infrastructure costs to keep adding RAM, or performance degrades. Queries slow down. Scenario creation stalls. Users start waiting. Waiting kills decisions.

The in-memory tax limits model size, scenario count, user concurrency, and decision velocity. The infrastructure bill is just where it shows up first.

A Different Foundation: Two-Layer Architecture

The alternative decouples performance from memory size entirely. Instead of forcing everything into RAM, it uses two purpose-built layers working in concert.

Storage Layer: the durable home for all master data, built on a high-performance open table format designed for large-scale analytical workloads. The source of truth, always consistent, always complete.

Cache Layer: where speed lives. A disk-based columnar store with vectorized execution, it delivers sub-second query performance without requiring the dataset to fit in memory. When an application is built and deployed, only the tables relevant to that application are selectively loaded into the Cache Layer, not the entire dataset.

Between the Cache and the application sits the Semantic Layer, and it's doing more work than the name suggests. Every column in the data model is explicitly categorized as either an attribute (a dimension you slice and filter by) or a measure (a value you compute on). That categorization governs what gets loaded into Cache, how queries are structured, and what's exposed to the user. By the time someone opens the application, the data is already hot, pre-positioned and scoped for instant response, not pulled on demand.
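The attribute/measure split can be illustrated with a minimal sketch. Everything here is an assumption made for illustration: the `Role`, `Column`, `tables_to_cache`, and `build_query` names, the sample tables, and the SQL shape are hypothetical and are not taken from the platform itself. The sketch shows only how an explicit categorization can drive both which tables get loaded and how a query is formed.

```python
from dataclasses import dataclass
from enum import Enum


class Role(Enum):
    ATTRIBUTE = "attribute"  # a dimension to slice and filter by
    MEASURE = "measure"      # a value to compute on


@dataclass(frozen=True)
class Column:
    table: str
    name: str
    role: Role


# Hypothetical data model: every column is explicitly categorized.
MODEL = [
    Column("demand", "sku", Role.ATTRIBUTE),
    Column("demand", "location", Role.ATTRIBUTE),
    Column("demand", "week", Role.ATTRIBUTE),
    Column("demand", "units", Role.MEASURE),
    Column("lanes", "origin", Role.ATTRIBUTE),
    Column("lanes", "cost", Role.MEASURE),
]


def tables_to_cache(model, app_tables):
    """Return only the tables the application declares, in sorted order."""
    return sorted({c.table for c in model if c.table in app_tables})


def build_query(model, table, group_by, aggregate):
    """Build a grouped aggregate, rejecting columns used in the wrong role."""
    roles = {c.name: c.role for c in model if c.table == table}
    for name in group_by:
        if roles.get(name) is not Role.ATTRIBUTE:
            raise ValueError(f"{name!r} is not an attribute of {table!r}")
    if roles.get(aggregate) is not Role.MEASURE:
        raise ValueError(f"{aggregate!r} is not a measure of {table!r}")
    dims = ", ".join(group_by)
    return (
        f"SELECT {dims}, SUM({aggregate}) AS {aggregate} "
        f"FROM {table} GROUP BY {dims}"
    )


if __name__ == "__main__":
    # An application that declares only the demand table.
    print(tables_to_cache(MODEL, {"demand"}))
    print(build_query(MODEL, "demand", ["sku", "week"], "units"))
```

In this toy version, grouping by a measure or summing an attribute fails before any query runs, which is one way a semantic layer can constrain what is exposed to the user.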

Large workloads that would have crashed or stalled legacy systems run cleanly here.

The memory constraint is gone; the performance isn't.

Scenarios at Scale: Where Legacy Systems Break First

Scenario planning is where the in-memory tax hits hardest. Every new scenario in a traditional system is essentially a full copy of the dataset sitting in RAM. Five scenarios? Manageable. Fifty? You're burning through infrastructure. Five hundred? Forget it.

A modern architecture handles this through a hybrid copy strategy that plays to the strengths of each layer. In the Cache Layer, only the tables the application actually uses are physically copied from source to target scenario, strictly scoped to the application's footprint. In the Storage Layer, a zero-copy clone is created across the full dataset, nearly instantaneous and costing virtually no additional storage. Tables are shared between scenarios until an actual write occurs.

Scenario creation is fast and cheap. Edits in one scenario never affect another. The full dataset is always protected. Cache holds only what the app needs; Storage maintains the complete snapshot.
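The hybrid copy strategy can be sketched in a few lines, under stated assumptions. The `StorageLayer` and `CacheLayer` classes below are hypothetical toys, not the platform's implementation: storage is modeled as a map from scenario to file pointers, so a clone copies pointers only and a write gives the scenario its own file, while the cache physically copies just the tables the application uses.

```python
class StorageLayer:
    """Toy zero-copy clone: scenarios map table names to shared file ids."""

    def __init__(self):
        self.files = {}      # file_id -> immutable tuple of rows
        self.scenarios = {}  # scenario -> {table: file_id}
        self._next_id = 0

    def _write_file(self, rows):
        file_id = self._next_id
        self._next_id += 1
        self.files[file_id] = tuple(rows)
        return file_id

    def load(self, scenario, table, rows):
        self.scenarios.setdefault(scenario, {})[table] = self._write_file(rows)

    def clone(self, source, target):
        # Copy only the pointer map; no data file is duplicated.
        self.scenarios[target] = dict(self.scenarios[source])

    def write(self, scenario, table, rows):
        # A write points this scenario at a new file; others keep the old one.
        self.scenarios[scenario][table] = self._write_file(rows)

    def read(self, scenario, table):
        return list(self.files[self.scenarios[scenario][table]])


class CacheLayer:
    """Toy cache: physically copies only the application's tables."""

    def __init__(self):
        self.tables = {}  # (scenario, table) -> list of rows

    def load(self, storage, scenario, app_tables):
        for table in app_tables:
            self.tables[(scenario, table)] = storage.read(scenario, table)

    def copy_scenario(self, source, target, app_tables):
        for table in app_tables:
            self.tables[(target, table)] = list(self.tables[(source, table)])


if __name__ == "__main__":
    storage, cache = StorageLayer(), CacheLayer()
    storage.load("base", "demand", [("sku1", 10)])
    storage.load("base", "lanes", [("dc1", "store1")])
    cache.load(storage, "base", ["demand"])

    # Create a what-if scenario: pointer clone in storage, scoped copy in cache.
    storage.clone("base", "whatif")
    cache.copy_scenario("base", "whatif", ["demand"])
    print(len(storage.files))  # data files after the clone

    storage.write("whatif", "demand", [("sku1", 99)])
    print(storage.read("base", "demand"), storage.read("whatif", "demand"))
```

The design point the sketch tries to capture is that the number of data files grows with writes, not with the number of scenarios.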

Multi-User Load Without the Shared Memory Problem

When dozens or hundreds of users are working simultaneously across scenarios, most systems start to buckle. The architecture prevents this through scenario-partitioned storage.

Every table in both Cache and Storage is partitioned by scenario. Each scenario is a first-class partition with full data isolation. Queries for one scenario never scan data belonging to another. A user works within a single active scenario at a time, eliminating conflicting writes or cross-scenario corruption.

As scenario count grows, query performance doesn't degrade. Each query stays scoped to its own slice of data. In legacy systems, every new scenario adds load to a shared memory pool, another manifestation of the in-memory tax that compounds with every user and every what-if.
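A minimal sketch of scenario partitioning, assuming a hypothetical `PartitionedTable` class and an in-process dictionary standing in for real partitioned files. It shows only the property described above: a query touches its own scenario's partition, so the rows scanned do not depend on how many other scenarios exist.

```python
from collections import defaultdict


class PartitionedTable:
    """Toy table whose rows are stored per scenario partition."""

    def __init__(self):
        self._partitions = defaultdict(list)  # scenario -> rows
        self.rows_scanned = 0                 # instrumentation for the demo

    def insert(self, scenario, row):
        self._partitions[scenario].append(row)

    def query(self, scenario, predicate):
        # Only the active scenario's partition is read.
        rows = self._partitions.get(scenario, [])
        self.rows_scanned += len(rows)
        return [row for row in rows if predicate(row)]


if __name__ == "__main__":
    table = PartitionedTable()
    for units in (5, 12, 30):
        table.insert("base", {"sku": "sku1", "units": units})
    # Many other scenarios exist, but they live in separate partitions.
    for i in range(100):
        table.insert(f"whatif-{i}", {"sku": "sku1", "units": i})

    hits = table.query("base", lambda row: row["units"] > 10)
    print(len(hits), table.rows_scanned)
```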

Preventing Overload

Four design choices work together to prevent overload structurally rather than absorbing it with bigger hardware. Two operate at the data level. First, only the tables a specific application needs are loaded into Cache, so users are never competing for a single monolithic pool. Second, when new scenarios are created, the Storage Layer clones metadata pointers rather than data files, so I/O overhead grows only with actual data changes, not with scenario count.

The other two operate at the write level. Third, saves flow from Cache to Storage asynchronously, insulating active query performance from background write operations. Fourth, during a scenario run, saves to Storage are intentionally gated until the run completes, eliminating the I/O contention and race conditions that come from simultaneously serving an active compute run and absorbing a write for the same scenario.
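The write-level behavior can be sketched as a write-behind queue with a per-scenario gate. This is an illustration under assumptions, not the platform's mechanism: the `WriteBehind` class, its method names, and the use of a dictionary as the storage target are all hypothetical. Saves return immediately and are applied by a background thread; while a scenario run is active, that scenario's saves are held and released only when the run ends.

```python
import queue
import threading


class WriteBehind:
    """Toy asynchronous Cache-to-Storage writer with run-time gating."""

    def __init__(self, storage):
        self.storage = storage          # dict: (scenario, table) -> rows
        self._pending = queue.Queue()   # saves waiting for the background worker
        self._gated = {}                # scenario -> saves held during a run
        self._lock = threading.Lock()
        worker = threading.Thread(target=self._drain, daemon=True)
        worker.start()

    def _drain(self):
        # Background worker: applies saves without blocking callers.
        while True:
            key, rows = self._pending.get()
            self.storage[key] = rows
            self._pending.task_done()

    def save(self, scenario, table, rows):
        with self._lock:
            if scenario in self._gated:
                # A run is active for this scenario: hold the save.
                self._gated[scenario].append(((scenario, table), rows))
            else:
                self._pending.put(((scenario, table), rows))

    def begin_run(self, scenario):
        with self._lock:
            self._gated.setdefault(scenario, [])

    def end_run(self, scenario):
        # Release held saves in order once the run completes.
        with self._lock:
            for item in self._gated.pop(scenario, []):
                self._pending.put(item)

    def wait(self):
        """Block until every queued save has reached storage."""
        self._pending.join()


if __name__ == "__main__":
    storage = {}
    writer = WriteBehind(storage)
    writer.begin_run("base")
    writer.save("base", "demand", [("sku1", 42)])
    writer.wait()
    print(("base", "demand") in storage)  # save is still gated
    writer.end_run("base")
    writer.wait()
    print(storage[("base", "demand")])
```

Saves for scenarios without an active run are unaffected by the gate, so gating one scenario does not delay writes for another.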

The Bigger Picture: Open Architecture vs. Vendor Lock-In

There's a strategic dimension to this that goes beyond performance tuning.

Traditional planning systems are built on legacy, proprietary in-memory engines: closed systems that are slow to evolve, where innovation happens entirely at the vendor's discretion. The in-memory tax is a strategic dependency as much as a technical one. You're locked into a single vendor's roadmap, paying their price for every performance improvement.

A platform built on open-source technologies sits in a fundamentally different position. It benefits from a rapidly innovating ecosystem, a global community constantly pushing performance, scalability, and capability forward. Customers ride that momentum rather than waiting for a single vendor to prioritize their problem. That said, ecosystem innovation must be adopted deliberately, validated against real client use cases, tested for backward compatibility, and enabled only when it genuinely solves a problem worth solving.

The Bottom Line

Speed in planning is an architectural property, not a configuration option. The in-memory tax, the assumption that performance requires everything to live in RAM, constrains model size, scenario count, user concurrency, and decision velocity. Every year on a memory-bound system, that constraint compounds.

The architecture described here, two-layer storage, semantic scoping, hybrid scenario copies, partition-level isolation, and async write management, is what it looks like when speed and scale are engineered in from the start.

Get the architecture right. The fast decisions follow.
